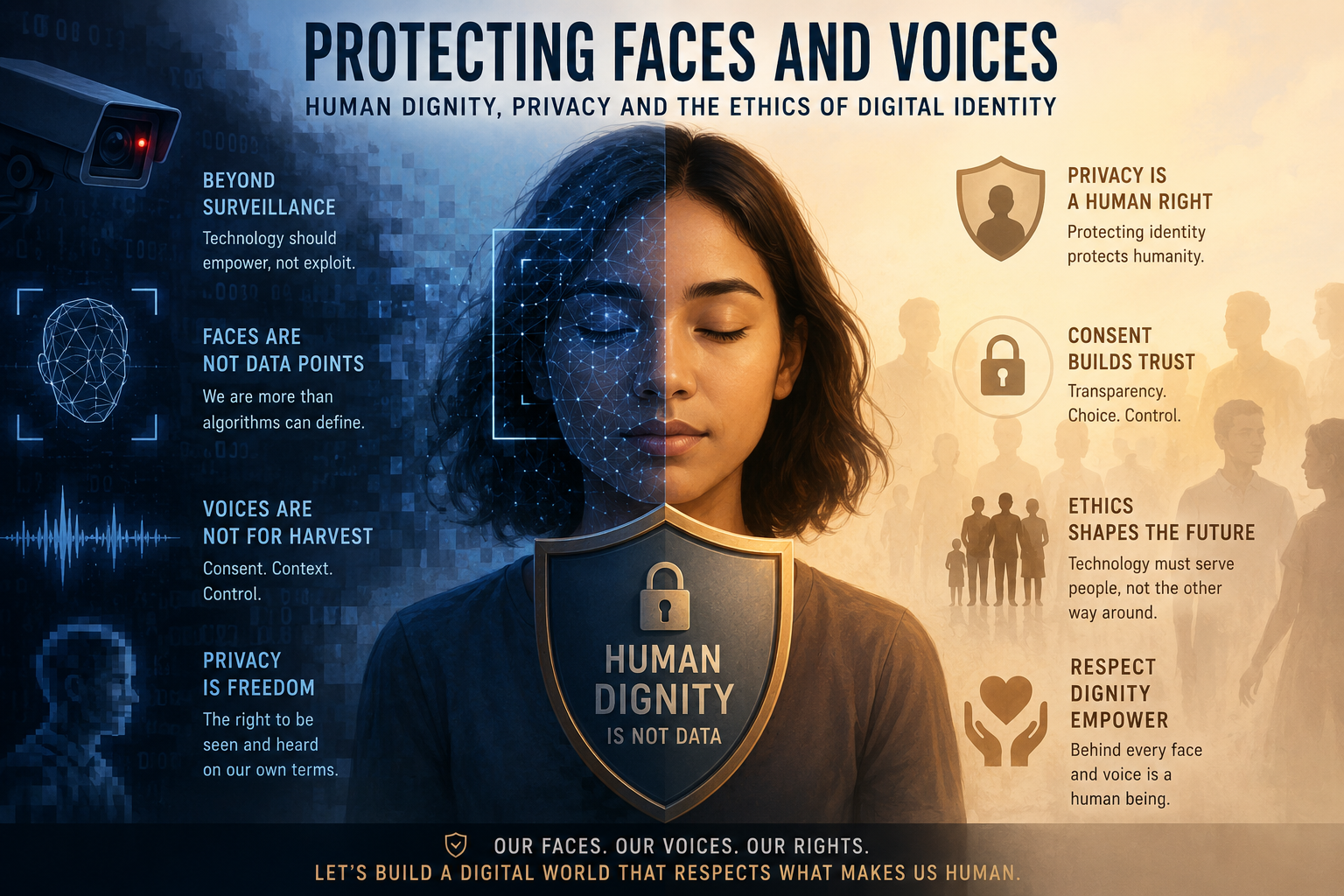

In an age where a single photo, voice note, or video can travel across the globe in seconds, the concept of identity has expanded far beyond physical presence.

Our faces and voices, once confined to personal interactions, are now digitized, stored, shared, and sometimes manipulated.

As we observe World Communications Week 2026, its focus on our faces and voices calls attention to a pressing ethical question: how do we protect human dignity in a world where digital identity is increasingly vulnerable?

*The Rise of Digital Identity*

Digital identity is no longer limited to usernames and passwords. It includes biometric data such as facial features, voice patterns, and fingerprints, as well as behavioral data such as online activity and communication styles.

While these technologies offer convenience and innovation, they also introduce new risks. Unauthorized data collection, surveillance, and identity theft can occur without individuals even realizing it.

The rapid growth of artificial intelligence has intensified these concerns. Deepfakes, for instance, can replicate a person’s face or voice with alarming accuracy.

This raises serious ethical issues: Who owns your likeness? Who has the right to reproduce your voice? And what safeguards exist to prevent misuse?

*Human Dignity in the Digital Space*

At the heart of this issue lies human dignity, the inherent worth of every individual. When someone’s image or voice is used without consent, especially in misleading or harmful contexts, it undermines that dignity. Digital spaces must not become environments where people are reduced to data points or manipulated representations.

Respecting dignity means ensuring informed consent, transparency, and accountability. It also means recognizing that behind every digital profile is a real human being with rights, emotions, and a reputation that can be deeply affected by digital misuse.

*Privacy as a Fundamental Right*

Privacy is not just about secrecy; it is about control. Individuals should have the right to decide how their personal data is collected, used, and shared.

However, many digital platforms operate on business models that prioritize data extraction over user protection.

Stronger data protection laws, ethical tech design, and user education are essential. People must be empowered to understand their digital footprint and take steps to protect it.

This includes using secure platforms, being cautious about sharing personal media, and advocating for better privacy standards.

*Ethical Responsibility of Technology Creators*

Developers, companies, and policymakers hold significant responsibility in shaping the digital landscape. Ethical considerations should be embedded in every stage of technological development, from design to deployment.

This includes:

✅Building systems that prioritize user consent

✅Implementing safeguards against misuse of biometric data

✅Ensuring transparency in how data is collected and processed

✅Creating mechanisms for accountability when harm occurs

Ethics should not be an afterthought but a foundation.

*A Collective Responsibility*

Protecting faces and voices is not solely the responsibility of governments or tech companies. It requires collective awareness and action. Educators, communicators, religious leaders, and everyday users all play a role in fostering a culture of respect and responsibility online.

As we reflect during World Communications Week, the challenge is clear: to build a digital world that enhances human connection without compromising human dignity. Technology should serve humanity, not exploit it.

*Conclusion*

Our faces and voices are deeply personal expressions of who we are.

In the digital age, protecting them is essential to preserving identity, dignity, and trust. By embracing ethical practices, strengthening privacy protections, and promoting digital responsibility, we can ensure that the future of communication remains both innovative and humane.

By Sr. Emmanuella Dakurah, HHCJ (Catholic Sister Communicators Network-Ghana, CASCON-GH)